Enterprise Data Platform with Databricks, Part 2

Introduction

Welcome back to our blog series on building modern enterprise data platforms!

In Part 1 of our series, we explored the strategic and architectural foundations of a future-proof cloud data platform. Today, we’ll dive deeper into the technical implementation of the first and most important step: Ingestion.

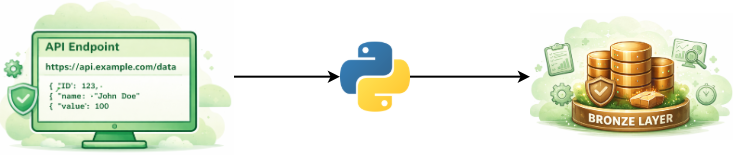

In the traditional enterprise world, powerful but inflexible data ingestion tools are often used. In contrast, when building modern cloud platforms, we prioritize maximum flexibility and scalability by combining Python’s open-source requests library with the computational power of Apache Spark.

Visualization created with the help of AI (Gemini)

Ingestion

Many enterprise APIs require complex logic for authentication, error handling, and pagination. To address these challenges and gain full control over API calls, we leverage the flexibility of Python. The benefits are clear:

- Smart Pagination: Metadata such as X-Pages can be read dynamically from the API response headers. This allows the total number of pages to be determined up front and the requests for subsequent pages to be precisely controlled.

- Tailored Resilience: Robust error handling can be implemented directly in the code. We strictly distinguish between temporary errors (e.g., 503: Service unavailable), which trigger automatic retry logic, and critical errors (e.g., 401: Unauthorized), which trigger a clean termination and an alert.

- Open Source & Cost Efficiency: By using Python, we rely on open-source software instead of licensed third-party tools. No additional license costs arise; the only expenses are the compute costs already incurred within Databricks. We also avoid vendor lock-in: the ingestion code is portable, transparent, and remains entirely under our control.
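
The pagination and retry behaviour described above can be sketched roughly as follows. This is a minimal illustration, not our production code: the function names, the `page` query parameter, the set of status codes treated as temporary, and the assumption that every page returns a JSON array are all hypothetical. The `session` argument is expected to behave like a `requests.Session`.

```python
import time


class ApiRequestError(Exception):
    """Raised for non-retryable responses or when all retries are used up."""


# Assumption: these status codes are treated as temporary and retried.
RETRYABLE_STATUS = {429, 502, 503, 504}


def get_with_retry(session, url, params, max_retries=3,
                   backoff_seconds=1.0, sleep=time.sleep):
    """GET one page; retry temporary errors, fail fast on everything else."""
    for attempt in range(max_retries + 1):
        response = session.get(url, params=params, timeout=30)
        if response.status_code == 200:
            return response
        if response.status_code in RETRYABLE_STATUS and attempt < max_retries:
            sleep(backoff_seconds * 2 ** attempt)  # exponential backoff
            continue
        # Critical error (e.g. 401) or retries exhausted: stop cleanly.
        raise ApiRequestError(f"{url} returned HTTP {response.status_code}")


def fetch_all_pages(session, url, **retry_options):
    """Read page 1, take the page count from X-Pages, then fetch the rest."""
    first = get_with_retry(session, url, {"page": 1}, **retry_options)
    total_pages = int(first.headers.get("X-Pages", 1))
    records = list(first.json())
    for page in range(2, total_pages + 1):
        response = get_with_retry(session, url, {"page": page}, **retry_options)
        records.extend(response.json())
    return records
```

With the requests library installed, a call could look like `fetch_all_pages(requests.Session(), "https://api.example.com/orders")`, where the URL is a placeholder. The calling job would catch `ApiRequestError` to trigger the alert.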

The bridge to the platform is built by moving the short-lived API responses into Databricks' distributed architecture: we convert the JSON data into a Spark DataFrame, making the information available for parallel processing on the cluster.

In this step of the process, we also leverage Spark’s flexibility for minimal technical processing - such as adding an ingested_at timestamp, cleaning up field names, converting the types of critical attributes, or filtering out obvious duplicates - to provide a valid data foundation for the subsequent business logic right from the Bronze layer.
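
As a rough sketch of this step, the conversion and the light clean-up could look like the following. The helper names, the parameters, and the snake_case naming rule are assumptions chosen for illustration; `records` stands for the list of dictionaries parsed from the API's JSON responses, and `spark` for the active SparkSession.

```python
import re


def to_snake_case(name):
    """Turn 'customerName' or 'Order Total' into 'customer_name' / 'order_total'."""
    name = re.sub(r"[^0-9A-Za-z]+", "_", name.strip())   # non-alphanumerics -> "_"
    name = re.sub(r"([a-z0-9])([A-Z])", r"\1_\2", name)  # split camelCase
    return name.strip("_").lower()


def to_bronze_frame(spark, records, schema, key_columns, casts):
    """Convert parsed JSON records into a lightly cleaned Spark DataFrame."""
    # Imported here so the helper above stays usable without a Spark installation.
    from pyspark.sql import functions as F

    # An explicit schema avoids surprises from schema inference.
    df = spark.createDataFrame(records, schema=schema)
    # Clean up field names.
    df = df.toDF(*[to_snake_case(c) for c in df.columns])
    # Convert the types of critical attributes.
    for column, target_type in casts.items():
        df = df.withColumn(column, F.col(column).cast(target_type))
    # Drop obvious duplicates, then stamp each row with the load time.
    return (
        df.dropDuplicates(key_columns)
        .withColumn("ingested_at", F.current_timestamp())
    )
```

A call might look like `to_bronze_frame(spark, records, "id BIGINT, customerName STRING, orderTotal STRING", ["id"], {"order_total": "decimal(18,2)"})`. The schema and column names here are invented for the example. Note that `key_columns` and `casts` refer to the column names after renaming.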

The Spark DataFrame is ultimately persisted in the Bronze layer in Delta format, the modern standard for enterprise lakehouses. Unlike plain file formats, Delta Lake offers ACID transactions: a write operation is either committed in full or, in the event of an error, never becomes visible to readers, which provides essential protection against data corruption.
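
A minimal sketch of such a write is shown below. The function name and the choice of append mode are assumptions; whether a Bronze table is appended to or overwritten depends on the source system.

```python
def append_to_bronze(df, table_name):
    """Append a Spark DataFrame to a Delta table as a single atomic commit."""
    (
        df.write
        .format("delta")     # store the data as a Delta table
        .mode("append")      # keep existing rows, add the new batch
        .saveAsTable(table_name)
    )
```

For example, `append_to_bronze(df, "bronze.orders_raw")` would add the current batch to a hypothetical table named `bronze.orders_raw`.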

A key advantage of the Delta format is the Time Travel feature, which allows us to query earlier versions of a table for as long as its history is retained. In addition, Delta Lake collects file-level statistics on write and uses them to skip irrelevant files at query time, which can improve query performance considerably.
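
To illustrate Time Travel, the following sketch reads an earlier table version through Spark SQL. The helper names and the table name in the usage note are hypothetical; `VERSION AS OF` and `DESCRIBE HISTORY` are standard Delta Lake SQL.

```python
def read_table_version(spark, table_name, version):
    """Return the table as it looked at the given Delta version number."""
    return spark.sql(
        f"SELECT * FROM {table_name} VERSION AS OF {int(version)}"
    )


def table_history(spark, table_name):
    """List the commits (version, timestamp, operation) of a Delta table."""
    return spark.sql(f"DESCRIBE HISTORY {table_name}")
```

Calling `table_history(spark, "bronze.orders_raw")` shows which versions exist, and `read_table_version(spark, "bronze.orders_raw", 0)` would then return the very first state of the table, provided that version has not yet been cleaned up.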

Outlook

The raw data is now safely stored in the Bronze Layer, but how do we turn it into refined, business-ready information?

In the upcoming third part of our series, we’ll focus on the medallion architecture and dbt (data build tool). We’ll show you how we implement data transformations in the Silver and Gold layers in an agile and transparent manner, using established software engineering practices such as version control and automated testing.

Stay tuned!